Breaking News

- “Unleashed Fury: The Impact of a Potent Storm on the South-Central United States”

- “Brooklyn Hit-and-Run Tragedy: Upgraded Charges Offer Little Solace to Victim’s Family”

- “Epidemic Declared: Dengue Cases Surge in Puerto Rico”

- “Safety in the Skies: Experts Like Anthony Brickhouse Offer Reassurance Amid Recent Flight Incidents”

- “The Future of Obi-Wan: Ewan McGregor’s Uncertain Stance on Season 2”

EDUCATION

11 hours ago

“Escaping Shadows: A Tale of Survival Amid Nigeria’s School Abduction Crisis”

The weary figure stood at the doorway, visibly drained and dirt-streaked. For two long years, this boy had been among…

CANADA

11 hours ago

“MC Gilles Fired: A Dramatic Turn of Events at 98,5 FM”

A dramatic turn of events at 98,5 FM: MC Gilles, whose real name is Dave-Éric Ouellet, has been fired.…

NEWS

1 day ago

“Rain Threatens Dundee-Rangers Fixture: Final Decision Awaited”

The rescheduled match between Dundee and Rangers faces uncertainty due to persistent wet weather in the Dundee area. Despite a…

NEWS USA

1 day ago

“Unleashed Fury: The Impact of a Potent Storm on the South-Central United States”

A potent storm system wreaked havoc across the south-central United States on Tuesday, unleashing heavy rainfall, fierce winds, and widespread…

SRI LANKA

2 days ago

“Government Initiates Transformation of Hingurakgoda Airport into International Air Hub”

Colombo, April 9 (Daily Mirror) – The government has announced plans to elevate the status of the Hingurakgoda domestic airport,…

SCIENCE

2 days ago

“Unveiling the Future: Mapping the Path of Solar Eclipses”

It’s always a good time to start contemplating the next solar eclipse.…

HEALTH

3 days ago

“Rebuilding Trust: A Crucial Component of Pandemic Preparedness”

Media outlets are sounding the alarm about the potential devastation of a bird-flu pandemic, cautioning that it could surpass the…

ENTERTAINMENT

3 days ago

“Wapakoneta’s St. Paul UCC Honors Neil Armstrong: A Celestial Tribute”

St. Paul United Church of Christ in Wapakoneta prepared for Monday’s eclipse with a special service honoring one of their…

BUSINESS

4 days ago

“Your Complete Guide to the 2024 Solar Eclipse in North America”

On Monday, April 8, millions of Americans in the path of totality will experience a momentary darkening of the sky…

SPORTS

4 days ago

“The Lakers Take on the Timberwolves: A Crucial Showdown”

The Los Angeles Lakers are gearing up to face the Minnesota Timberwolves on Sunday, marking their final back-to-back game of…

NEW YORK

1 week ago

“Brooklyn Hit-and-Run Tragedy: Upgraded Charges Offer Little Solace to Victim’s Family”

The individual responsible for a hit-and-run incident in Brooklyn, which tragically led to the death of a promising architect 16…

WORLD

1 week ago

“President Biden and Senator Sanders Unite to Tackle Healthcare Costs”

President Joe Biden and Sen. Bernie Sanders convened at the White House on Wednesday to emphasize the administration’s endeavors to…

CANADA

1 week ago

Environment Canada Issues Weather Warnings for Durham Region

Durham Region residents are urged to brace for inclement weather as Environment Canada has issued multiple weather advisories spanning from…

UK

1 week ago

“Unveiling the Risks: New Study Links Vaping to Increased Heart Failure Risk”

According to a recent extensive study in the United States, there’s now evidence contradicting the belief that e-cigarettes offer…

NEWS

1 week ago

“Meet Louie: The First-Ever Cadbury Bunny Raccoon”

In celebration of Easter candy, Cadbury Bunny has crowned its inaugural raccoon winner of this year’s Cadbury Bunny Tryouts photo…

HEALTH

1 week ago

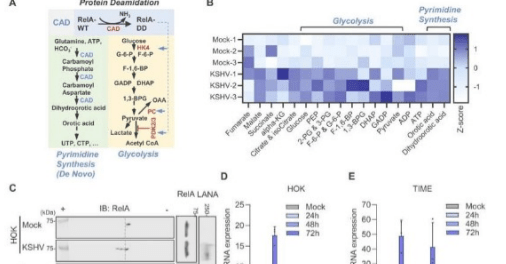

“Breakthrough Discovery: Cleveland Clinic Researchers Uncover Key Mechanism in KSHV-Associated Cancers, Paving the Way for New Treatments”

Researchers at Cleveland Clinic have identified a crucial mechanism employed by Kaposi’s sarcoma-associated herpesvirus (KSHV), also known as human herpesvirus…

TECH

1 week ago

Malicious Ad Campaigns Targeting macOS Users with Atomic Stealer and Realst Malware

A recent report from Jamf Threat Labs has unveiled a concerning trend of infostealer attacks targeting Apple macOS users. These…

HEALTH

1 week ago

Vigilance and Preparedness: Addressing Zoonotic Influenza Outbreaks

The outbreaks witnessed last year underscore the sobering reality of zoonotic influenza. They highlight that individuals of all ages are…

ENTREPRENEURS

2 weeks ago

How Entrepreneurs Can Capitalize on the Streaming Boom

When you’re an entrepreneur, you have the freedom to pursue any tools necessary to build a profitable business. Among those…

ENTERTAINMENT

2 weeks ago

“Unveiling Alleged Romantic Entanglements: The Ryu Jun Yeol, Han So Hee, and Hyeri Saga”

A recent report has surfaced, unveiling intriguing details regarding the purported romantic entanglements involving actors Ryu Jun Yeol, Han So…

BUSINESS

2 weeks ago

“Egg-citing Adventures: Join Us at Heritage Park Petting Farm for a Spring Bunny Encounter and Egg Hunt Extravaganza!”

Come and join in the excitement at the Heritage Park Petting Farm on March 30th, 2024, for an exhilarating egg…

EDUCATION

2 weeks ago

“AI Abuse: Safeguarding Students in the Digital Age”

In the corridors of her Illinois high school a few weeks back, a 15-year-old girl discovered that one…

AUTO

2 weeks ago

“Unlocking Affordable EV Ownership: How Low-Income Californians Can Drive Electric for Less”

Not everyone may find switching to an electric vehicle (EV) feasible, but for some, it presents a compelling option to…

UK

2 weeks ago

Ewan McGregor Embraces the Role of Count Rostov in “A Gentleman in Moscow”

Speculation over the casting of the lead role in “A Gentleman in Moscow” stirred up debate among fans. While…

CANADA

2 weeks ago

“Assessing Nigeria’s Post-AFCON 2024 Football Landscape: A Tale of Two Friendlies”

As attention shifts from the recent international break back to the hustle and bustle of club football, Nigerians are…

ENTERTAINMENT

2 weeks ago

Experience Life at Full Throttle

Alright, speed junkies, admit it: sometimes the humdrum routine of daily life leaves you absolutely craving an adrenaline-packed rush.…

SPORTS

2 weeks ago

Navigating the Cybersecurity Maze: A Cartoon Commentary

In today’s interconnected world, cybersecurity has become a paramount concern for individuals, businesses, and governments alike. As technology continues to…

NEWS USA

2 weeks ago

“Epidemic Declared: Dengue Cases Surge in Puerto Rico”

Amidst a recent surge in dengue cases, health authorities in Puerto Rico announced an epidemic on Monday, with at least…

TECH

2 weeks ago

“Madmac: The Hypercar Drift Project Set to Shake Up the Automotive World”

Some cars are inherently better suited for drifting than others, and the notion of seeing a hypercar on…

HEALTH

2 weeks ago

“Surviving Against the Odds: Texan Steven Spinale’s Harrowing Medical Journey”

A man from Texas, USA, has overcome extraordinary challenges after surviving a terrifying medical crisis sparked by an attempt to…